But there is a yet more weighty objection to hedonism of any kind: the so-called “experience machine.” Imagine that I have a machine that I could plug you into for the rest of your life. This machine would give you experiences of whatever kind you thought most valuable or enjoyable—writing a great novel, bringing about world peace, attending an early Rolling Stones’ gig. You would not know you were on the machine, and there is no worry about its breaking down or whatever. Would you plug in? Would it be wise, from the point of your own well-being, to do so? Robert Nozick thinks it would be a big mistake to plug in: “We want to do certain things . . . we want to be a certain way . . . plugging into an experience machine limits us to a man-made reality.”

—“Well-Being” entry in the Stanford Encyclopedia of Philosophy

Every month or so, a new advance in virtual reality is publicized, usually starting with technology publications and eventually making its way to mainstream newspapers and cable television. The stories are usually written by technology reporters, who tend to evaluate the devices with optimism. Their reports give emerging technologies the dignity a toddler gives to a new tablet, with the same sense of wonder and discovery.

Writers in other fields imagine research and development labs creating a different version of this future; fictionalized portrayals frame the technology as a potent and dangerous form of escapism. An oft-expressed anxiety about virtual reality is the complete detachment of the user, who is trapped in a permanent illusion. There is nothing new about this fear; it descends from fears of constructed realities that have been with us since Plato’s allegory of the cave. What both cohorts fail to see is what virtual reality is today: an audiovisual device in the same way that a television or a smartphone is an audiovisual device.

Virtual reality headsets today are banal: in consumer applications, they are chunky helmets with curved screens and speakers that blare into the user’s ears. A VR game does not pose the danger its successors might: it uses immersion to provide mere entertainment, not necessarily to deliver the user from his reality. Today, the technology is neither where fiction writers fear it is, nor where technology reporters hope it is. However, it is advancing with enough velocity to merit further examination.

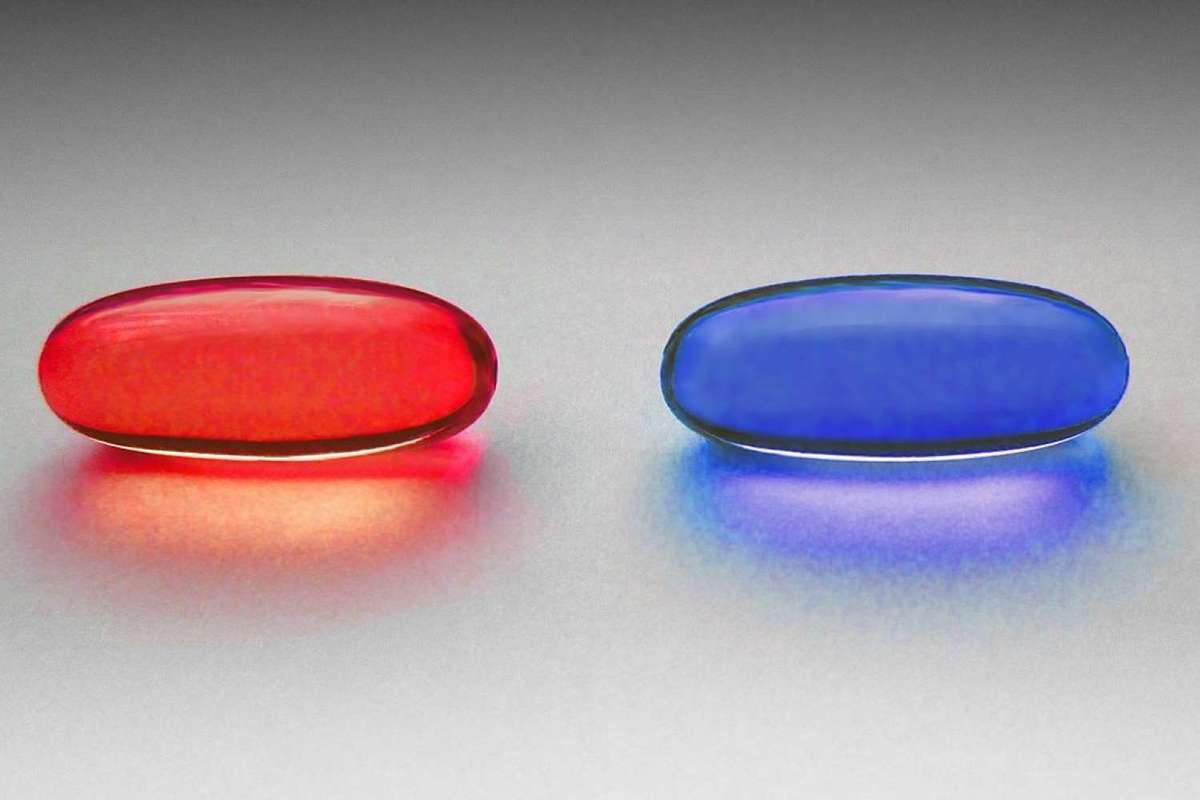

The mere idea that people can check out of this world has always troubled humankind. As the media of communication changed, the idea itself took different forms. The fabled dissociations began with shadow play in a cave and evolved into what we foresee in virtual reality’s near future. One of the most popular recent examples is the choice between the red and blue pills in The Matrix, which predictably ends in “reality” winning, implying a consensus that a bitter reality is a syrup we should always take. This moral consciousness protects us from the danger of being controlled by technology. If we were in Neo’s shoes, we (and most of our ancestors) would make Neo’s decision. The motives of the machine are then clear to us: it is meant to dissociate us from reality, an intent we have elected to deem evil.

We need to look then at the cases in which virtual reality promises more than the amusement it is currently providing. The VR-boogeymen are not the mainstream gaming and commercial applications trending today. Rather, they are technologies that specifically target the emotionally vulnerable: their methods are sophisticated and psychologically impactful because they—much like other forms of escapist media—dig into sensations of grief and bereavement. In a default, fully aware mental state, most moral judgments will lead to a rejection of fake worlds. The most compelling uses of the “trapped in a simulation” trope often frame virtual reality as a “last resort”, or a gentler death for people whose lives have become too unbearable.

Because of virtual reality’s historic connection to gaming, scientists have conducted research at the intersection of gaming, cognitive psychology, and virtual reality. In Boundaries of the Self and Reality Online, Dr. Joan Preston associates individuals who are highly willing to participate in VR with what she calls higher absorption: there is more brain activity among the willing, which leads to increases in their “creativity, hypnotizability . . . and sensitivity”. In the same essay collection, Drs. Angelica Ortiz de Gortari and Mark Griffiths explore the links between video games and perception. People who play video games have had more experiences in which their cognition is affected: 65% of surveyed people had mistaken a real-life sound for something from a video game. Their research also discusses various individual experiences of “detachment from the objective reality, particularly among individuals with dissociative tendencies or symptoms”. They go further by describing extreme situations in which certain gamers have “lost contact with reality”.

While the second article veers into the qualitative, it indicates that the technology is significant: a particularly willing user of virtual reality is more susceptible to having their cognition altered and possibly to reaching complete dissociation. What we then have on our hands is a hypothetical tool that could profoundly change the user’s psyche: they might truly come to believe that the characters they see in an alternate reality are real, especially if they are willing to do so. Specific applications have harnessed that power and have been proposed for many therapeutic settings, such as assisting individuals with neurological disorders. These applications place VR within assistive technology rather than making it a completely novel use case. Other engineers are more ambitious in their objectives for virtual reality: they want to use it to assist in forms of therapy for grief, depression, or anxiety.

One of the most recent examples of these use-cases is Meeting You, a VR reunion of a mother with her deceased seven-year-old daughter. A Korean studio created a digital avatar of the daughter, gave the mother a VR headset, and started the program. The mother, despite being fully aware that the daughter was an avatar, was still profoundly touched by the moment. The developers of the program were adamant about distinguishing between “remembering” and “recreating” the daughter, which suggests they recognized a universal taboo surrounding attempts to represent the dead.

They understood themselves as engineers and scientists who did not want to be the evil geniuses; rather, they wanted to “humanize” technology and make it less “cold.” In short, no one wanted to be the liar, and no one wanted to be lied to, much in line with the moral sensitivities we discussed earlier. While this justification was given in a documentary TV interview, it still suggests that the studio understands what the public wants from its technology. Despite everyone on set being in on the joke, the episode also shows a mind-altering potency that earlier technologies did not possess.

While it is inappropriate to assess others’ bereavement, the mother was less vulnerable than someone who only recently faced the death of a relative. The episode wanted to show someone who healthily responded to the issue and prove that the technology itself was not evil. Most fiction and science-fiction writers have not been as charitable as technology reporters are. They like to intentionally give inventors personalities that are particularly effective in creating dystopias: misguided good motives or a moral aloofness that becomes indiscriminately evil. Many of these sinister stories successfully fed into viewers’ fears of a familiar world in which pervasive technologies run rampant.

Black Mirror, for example, has made a brand out of being a near-future science-fiction dystopia set under dreary English skies. The most VR-adjacent episode is its second-season premiere, “Be Right Back.” While the technology itself is not virtual reality, its selling points are identical to those of the Korean technology: it allows reunification with a dead boyfriend. The technology is first mentioned at a funeral, when the girlfriend is particularly vulnerable: a friend mentions “resuscitation” software, only to be met by the girlfriend’s protest. The resurrection would be a form of desecration: it would be reanimating a corpse and keeping only the body. The friend still goes ahead and enrolls the girlfriend in the program. The girlfriend, again echoing our own conclusions, is outraged but eventually yields to the temptation of reconnecting with her boyfriend through his clone.

The journey begins smoothly, and all is well until some glitches appear. She becomes more lucid: the man in front of her is not her boyfriend, let alone a real human. After some time, the presentiment turns into disillusionment, culminating in a scene at a cliff: the fake boyfriend is uncharacteristically unafraid of heights, which completely shatters the girlfriend’s illusion. When she confronts the android about it, he conforms to the girlfriend’s mental image and acquires acrophobia. The episode ends with the girlfriend’s tortured screams, clearly indicating that we ought to fear the robot.

Both Meeting You and Be Right Back use intentionally flawed and imperfect technology. All parties understand that computer characters cannot completely copy human personality because they will always need more information. What caused the girlfriend’s alarm was the uncanniness of the character: the closeness to the real boyfriend in conjunction with his very artificial quality. Similarly, the mother always knew that the daughter was fake, as the designers were deliberately preventing illusion. This unspoken agreement goes a long way and embeds itself into the story and the users’ reactions. The contract prevents the suspension of disbelief that more pessimistic narratives lean into.

But what if the images were eventually generated from pure memory? These characters would then become exact projections of the person as remembered, and the cracks would stop showing. The contract would then be impossible to maintain, since the bereaved would have no way of knowing that the person in front of them is a hologram. Dystopias set later than Black Mirror’s near future presume a better technology that would situate the revived character on the other side of the uncanny valley. What we then need to look at is not the specific merits and demerits of the technology, which is relatively innocuous today. Rather, we need to ask whether our moral outrage would be preserved if virtual reality were perfected. In other words, what if we did not know we were in the simulation? There would be no disillusionment anymore: we would truly believe we were reunited with our beloved, and we would die before they did.

What these reunions give is a painless and desirable cure for grief. Most of us like to think that the human experience is only a worthy one if loss and anger come with love, but we have all faced to varying degrees the temptation to escape anguish. What dystopian VR promises is a free and painless escape, one only fathomable with better technology and severe bereavement. Once virtual reality has its singularity moment, we will be facing a completely different monster. The debate will then become one of utility: will the calculus choose removing suffering or removing reality?

One of the most significant (and perhaps heavy-handed) modern treatments of this quandary is in the novel A Canticle for Leibowitz, where the doctor (clearly the stand-in for a scientific utilitarian) declares, “Pain is the only evil I know about.” Ultimately, it was never about virtual reality as a technology. The debate is another chapter in a long (and perhaps never-ending) series that asks what makes a life worth living. The question being discussed is much older: “Is pain worth eliminating at any cost?” All dilemmas revolving around new technologies follow the same sort of moral prototypes: the technologies are evolved forms of existing media with slightly more potency and shine, giving us the illusion that the problems they pose are new.

What we need to do instead, to answer this and similar ethical questions, is simply to pose the questions that others have posed for centuries and work from there. We can apply this reasoning to any form of technology: A Canticle for Leibowitz has much to say about VR, much as Frankenstein does about artificial intelligence, or the myth of Icarus about transhumanism.

Much like the monks of the Albertian Order of Leibowitz, we have an incomplete understanding of the technology around us. Over the years, most of us have collected disparate pieces of information from here and there, eventually building a picture that is nowhere near accurate. We let ourselves be guided by people who, for one reason or another, are over- or underselling the technology’s significance. From there, we begin to form opinions and cherish our flawed memorabilia. We are prone to being influenced by fiction writers and technology journalists because consuming information is easier than synthesizing it. Furthermore, technical people have acquired a new authority that allows much of their (often insufficiently examined) efforts to go unchecked and unquestioned.

Ultimately, this anxiety we experience over dissociation is one related to suffering and its place in human lives. Are we obligated to feel pain even if presented with the option not to? The “experience machine” might soon vanish from the horizon of science fiction, and we might have to face it as soon as tomorrow.

EDITORIAL NOTE: The author wishes to thank The Veritas Forum for making this essay possible with their support.